The containerization of the IT world continues. What started with virtualization in the early 2000s has, with Docker, reached a state where it is once again a hype topic.

Therefore it's natural that people also started to play with AEM in Docker (https://adapt.to/2016/en/schedule/running-aem-in-docker.html, https://www.linkedin.com/pulse/running-aem-docker-satendra-singh and many more).

Of course I have been confronted with the requirement to run AEM in Docker as well: customers and partners ask how to run AEM in Docker and whether I can provide Dockerfiles. I hesitate to do so, because in my opinion Docker and AEM are not a good fit (at least right now, with AEM 6.3 in 2017).

Some background first: Docker containers should be stateless. Only if the application within the container holds no persistent state can you shut the container down (which means deleting all the files the application created inside the container itself), start it up again, or replace it with a different container running a new version of the application. The whole idea is to make the persistent state somebody else's problem (typically a database's). Deployments should be as easy as starting new containers (from pre-tested and validated Docker images) and shutting down the old ones. No more working and testing in production.

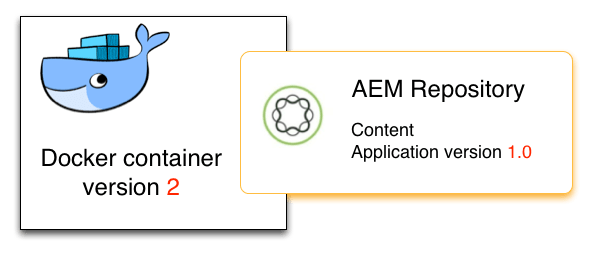

So, how does that collide with AEM? AEM is not only an application; the application is closely tied to a repository, which holds state. Typically the application is stored within the repository, right next to the "user data" (= content). This means that you cannot just replace an AEM instance inside Docker with a new instance without losing this content (or resetting it to the state shipped with the Docker image). Losing content is, of course, not acceptable.

So the typical docker rollout approach of new application versions (bringing new instances live based on a new docker image and shutting down the old ones) does not work with AEM; the content sitting in the repository is the problem.

People then came up with the idea that the repository can be stored outside of the Docker container, so that it isn't lost when the container is restarted or replaced. Docker calls this "host directory as data volume" (https://docs.docker.com/engine/tutorials/dockervolumes/#locate-a-volume).

That idea sounds neat, and of course it works. But then we have a different problem. When you start a container from a new Docker image and mount this data volume containing the repository state, your AEM still runs the "old" version of your application, because the application lives in the repository and not in the image. Starting the repository from a different Docker image therefore doesn't bring any benefit.

To update the AEM application inside the repository, you would still need to install it into a running repository, which means working in the production environment. And that is exactly what you wanted to avoid by using Docker.

With Docker we just wanted to start the new containers and stop the old ones.

Therefore I do not recommend using Docker with AEM: it rarely adds any value, but it makes the setup more complicated.

The only exceptions I would accept are really short-lived instances, where keeping the repository inside the container isn't a problem and purging it on shutdown is even a feature. Typically these are development instances (e.g. triggered by a continuous integration pipeline that automatically creates dedicated Docker instances for feature branches). But that's it.

As a side note: this does not only affect TarMK-based AEM instances. If you have MongoDB-based instances, the application is also stored within the (MongoDB) repository. Just running AEM from a new Docker image doesn't magically update the application.

To repeat myself: this reflects the current state. I know that AEM engineering is well aware of this, and I am sure they are trying to address it. Let's wait and see what the future brings 🙂
