It must have been 2007 when I first saw URLs with a query string “utm_id=someHexCode” in the logs of the Communiqué system I ran at that time. I still remember that we had about 4’000 of them on any given day, which was not much of a problem. But I didn’t know back then that we would still be dealing with the very same requests more than 15 years later, at an even higher rate and with more severe consequences.

What is special about these query strings? The most important thing for backend folks is that these query strings are a frontend topic. They are used to attribute requests to a certain source, which is important for the Analytics folks to track the effectiveness of their campaigns.

For example, the query string “utm_id=cm2026-35-1” could be the code for “email blast 1 of campaign 35 in 2026”. If a user clicks on that link in an email, the analytics code in the page reads this query string and reports it to the analytics server. This makes it possible to track the conversion rate or efficiency of this particular email blast and compare it to the results of a Facebook ad or other sources.

So this special type of traffic has 2 aspects which are important for backend folks like me:

- It typically happens in spikes: Right after these links are distributed (via ads, emails or any other channel), users will click them.

- These query strings have a meaning only on the frontend side; on the backend these parameters are not used at all.

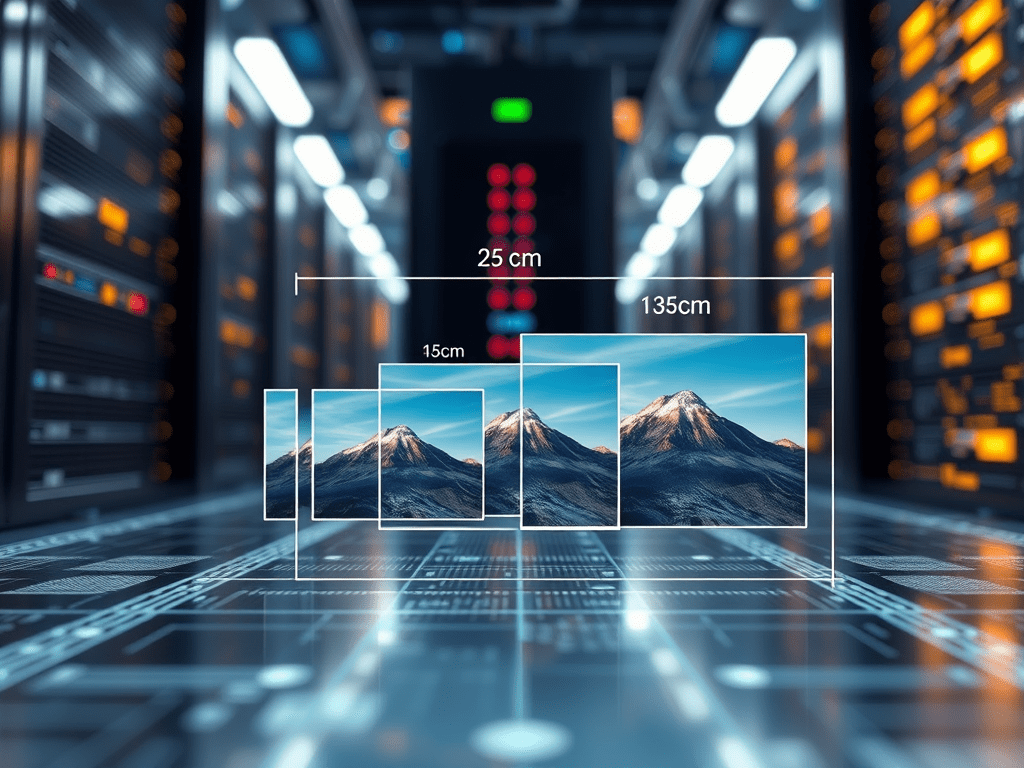

But as most caches don’t cache any response where the URL contains a query string, such requests bypass all CDN caches by default. That means that such requests very frequently end up on the AEM publish instance for rendering, while from a backend perspective all of the following requests produce the same result:

- /content.html (the response of this request could be cached)
- /content.html?utm_id=campaign1 (non-cacheable)
- /content.html?utm_id=campaign25 (non-cacheable)

And because these requests frequently arrive in spikes, such a campaign often triggers an overload of the AEM Publish layer. That is sad, because your expensive and successful marketing campaign is then responsible for a server-side outage, and instead of a great experience you serve your customers a slow site and/or errors.

Unfortunately I see too many of those situations.

What is the AEM answer to it?

The general idea to handle this situation is to strip these campaign parameters from the request, which turns them into requests for which the response can be served from a cache, where the usual caching and expiration rules apply. Where and how this can be done depends on your setup.
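To make the idea concrete, here is a minimal sketch in Python of what "stripping campaign parameters" means for a URL. It is not how the CDN or the dispatcher implements it. The set `CAMPAIGN_PARAMS` and the function name `strip_campaign_params` are assumptions made for illustration, so adjust the list to whatever your campaigns actually use.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: example campaign parameters; not an exhaustive or official list.
CAMPAIGN_PARAMS = {"utm_id", "utm_source", "utm_medium", "utm_campaign"}


def strip_campaign_params(url: str) -> str:
    """Return the URL without the campaign parameters listed above."""
    parts = urlsplit(url)
    # Keep every parameter that is not a campaign parameter, in original order.
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in CAMPAIGN_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


for url in (
    "/content.html",
    "/content.html?utm_id=campaign1",
    "/content.html?utm_id=campaign25",
):
    print(url, "->", strip_campaign_params(url))
```

All three example URLs end up as the same `/content.html`, so a cache in front of AEM can answer them with a single cached response.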

On AEM CS the best way is to handle this directly on the CDN (using Traffic Rules to normalize requests). This is the best solution because any campaign traffic is served directly from the CDN and does not bother the origin (that means the dispatcher and the publish instances). If you are on AEM CS you should use this approach.

An approach which can be implemented on any AEM setup is to handle it on the dispatcher. In the /ignoreUrlParams section you specify the parameters which should be stripped from the request. If there is no query string left, the request is considered cacheable, and it’s checked against the usual dispatcher rules.

But in every case you need to be able to identify the query parameters which you know are used by your AEM application. If you know them, you also know that you can ignore everything else. Configure them in the traffic rules or the /ignoreUrlParams section.
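The "know what you use, ignore everything else" rule can be sketched like this in Python. It only illustrates the logic and is not actual CDN or dispatcher configuration. `KNOWN_PARAMS`, `keep_known_params` and `is_cacheable` are hypothetical names, and the parameters `q` and `page` are assumed examples of what an application might read on the backend.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: the only query parameters this (hypothetical) application uses.
KNOWN_PARAMS = {"q", "page"}


def keep_known_params(url: str) -> str:
    """Drop every query parameter the application does not use."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key in KNOWN_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def is_cacheable(url: str) -> bool:
    """A request counts as cacheable here if no query string is left."""
    return urlsplit(keep_known_params(url)).query == ""


print(keep_known_params("/content.html?utm_id=cm2026-35-1&fbclid=abc"))
print(keep_known_params("/search.html?q=aem&utm_id=cm2026-35-1"))
```

The advantage of this direction is that a new, unknown tracking parameter does not require a configuration change: it is ignored by default, and only the parameters the application really needs reach the backend.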

Every AEM instance should have this configured in order to handle such traffic spikes.
